https://en.wikipedia.org/wiki/Flea#Egg

https://en.wikipedia.org/wiki/Phosphor

Consciousness after death is a common theme in society and culture in the context of life after death. Scientific research has established that the physiological functioning of the brain, the cessation of which defines brain death, is closely connected to mental states. However, many believe in some form of life after death, which is a feature of many religions.

https://en.wikipedia.org/wiki/Consciousness_after_death

--------------------------------------------------------------------------------------------------------------

https://www.sciencedaily.com/releases/2020/09/200903114214.htm

https://www.forbes.com/sites/fernandezelizabeth/2020/09/06/is-consciousness-continuous-like-a-movie-or-discrete-like-a-flipbook/?sh=76801d131013

https://www.youtube.com/watch?v=WnoIf2NwaRY

https://qz.com/663729/new-research-suggests-that-consciousness-is-developed-in-two-stages

https://elifesciences.org/articles/23871

https://www.google.com/url?sa=t&rct=j&q=&esrc=s&source=web&cd=&ved=2ahUKEwir7bfix5D9AhXijIkEHSBWBzk4ChAWegQIERAB&url=https%3A%2F%2Fgrazianolab.princeton.edu%2Fdocument%2F183&usg=AOvVaw0Pyvs6Hsplapn00kx-vJd8

https://anthrosource.onlinelibrary.wiley.com/journal/15563537

https://www.sciencedirect.com/book/9780128009482/the-neurology-of-consciousness

https://www.google.com/url?sa=t&rct=j&q=&esrc=s&source=web&cd=&ved=2ahUKEwi-voPgyJD9AhU0lYkEHRt8AUw4FBAWegQICRAB&url=https%3A%2F%2Fwww.pnas.org%2Fdoi%2F10.1073%2Fpnas.2024455119&usg=AOvVaw27_asx2sKtWwAvR9COFKAT

https://webspace.ship.edu/cgboer/jamesselection.html

https://www.google.com/url?sa=t&rct=j&q=&esrc=s&source=web&cd=&ved=2ahUKEwiJicCmyZD9AhV5lYkEHZHICng4PBAWegQICRAB&url=https%3A%2F%2Fresearchoutreach.org%2Farticles%2Fconsciousness-quantum-mechanics-plancks-constant%2F&usg=AOvVaw2K1_a7ZTCVGJdVZV_3afOI

------------------------------------------------------------------------------------------------------------

https://en.wikipedia.org/wiki/Biogerontology

https://en.wikipedia.org/wiki/Information-theoretic_death

https://en.wikipedia.org/wiki/Senescence

https://en.wikipedia.org/wiki/Holonomic_brain_theory

https://en.wikipedia.org/wiki/Electromagnetic_theories_of_consciousness

https://en.wikipedia.org/wiki/Artificial_consciousness

https://en.wikipedia.org/wiki/Binding_problem

https://en.wikipedia.org/wiki/Quantum_mind

https://en.wikipedia.org/wiki/Secondary_consciousness

https://en.wikipedia.org/wiki/Sentience

https://en.wikipedia.org/wiki/Soul

https://en.wikipedia.org/wiki/Stream_of_consciousness_(psychology)

https://en.wikipedia.org/wiki/Subconscious

https://en.wikipedia.org/wiki/Subjectivity

https://en.wikipedia.org/wiki/Unconscious_mind

https://en.wikipedia.org/wiki/Unconsciousness

https://en.wikipedia.org/wiki/Visual_masking

https://en.wikipedia.org/wiki/Animal_consciousness

https://en.wikipedia.org/wiki/Cartesian_theater

https://en.wikipedia.org/wiki/Dual_consciousness

https://en.wikipedia.org/wiki/Divided_consciousness

https://en.wikipedia.org/wiki/Category:Consciousness

https://en.wikipedia.org/wiki/Category:Devices_to_alter_consciousness

https://en.wikipedia.org/wiki/Feraliminal_Lycanthropizer

https://en.wikipedia.org/wiki/Brain_implant

https://en.wikipedia.org/wiki/Dreamachine

https://en.wikipedia.org/wiki/MKUltra

https://en.wikipedia.org/wiki/Sonic_weapon

https://en.wikipedia.org/wiki/Hypersonic_weapon

https://en.wikipedia.org/wiki/Scramjet

https://en.wikipedia.org/wiki/Category:Insurgency_weapons

https://en.wikipedia.org/wiki/Category:Space_weapons

https://en.wikipedia.org/wiki/Category:Railway_weapons

https://en.wikipedia.org/wiki/Category:Police_weapons

https://en.wikipedia.org/wiki/Category:Weapon_operation

https://en.wikipedia.org/wiki/Category:Non-lethal_weapons

https://en.wikipedia.org/wiki/Category:Energy_weapons

https://en.wikipedia.org/wiki/Category:Crew_served_weapons

https://en.wikipedia.org/wiki/Category:Flexible_weapons

https://en.wikipedia.org/wiki/Category:Fortification_weapons

https://en.wikipedia.org/wiki/Category:Weapon_guidance

https://en.wikipedia.org/wiki/Category:Guided_weapons

https://en.wikipedia.org/wiki/Category:Personal_weapons

https://en.wikipedia.org/wiki/Category:Paramilitary_weapons

https://en.wikipedia.org/wiki/Category:Mythological_weapons

https://en.wikipedia.org/wiki/Category:Weapons_countermeasures

https://en.wikipedia.org/wiki/Weaponry_(radio_program)

https://en.wikipedia.org/wiki/Psychoacoustics

https://en.wikipedia.org/wiki/Psychophysics

History

Many of the classical techniques and theories of psychophysics were formulated in 1860 when Gustav Theodor Fechner in Leipzig published Elemente der Psychophysik (Elements of Psychophysics).[5] He coined the term "psychophysics", describing research intended to relate physical stimuli to the contents of consciousness such as sensations (Empfindungen). As a physicist and philosopher, Fechner aimed at developing a method that relates matter to the mind, connecting the publicly observable world and a person's privately experienced impression of it. His ideas were inspired by experimental results on the sense of touch and light obtained in the early 1830s by the German physiologist Ernst Heinrich Weber in Leipzig,[6][7] most notably those on the minimum discernible difference in intensity of stimuli of moderate strength (just noticeable difference; jnd), which Weber had shown to be a constant fraction of the reference intensity, and which Fechner referred to as Weber's law. From this, Fechner derived his well-known logarithmic scale, now known as the Fechner scale.

Weber's and Fechner's work formed one of the bases of psychology as a science, with Wilhelm Wundt founding the first laboratory for psychological research in Leipzig (Institut für experimentelle Psychologie). Fechner's work systematised the introspectionist approach (psychology as the science of consciousness), which had to contend with the behaviorist approach, in which even verbal responses are as physical as the stimuli.
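The step from Weber's law to the logarithmic Fechner scale can be written out as a short derivation. This is the usual textbook sketch, not a quotation from the excerpt; the symbols are notation chosen here: I is stimulus intensity, k the Weber fraction, S the sensation magnitude, c a scaling constant, and I_0 the absolute threshold.

```latex
\begin{align}
\Delta I &= k\,I
  && \text{Weber's law: the jnd is a constant fraction of } I \\
dS &= c\,\frac{dI}{I}
  && \text{Fechner's assumption: every jnd adds the same step of sensation} \\
S &= c\,\ln\frac{I}{I_0}
  && \text{integrating from the threshold } I_0 \text{, where } S = 0
\end{align}
```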

Detection

An absolute threshold is the level of intensity of a stimulus at which the subject is able to detect the presence of the stimulus some proportion of the time (a p level of 50% is often used).[16] An example of an absolute threshold is the number of hairs on the back of one's hand that must be touched before it can be felt – a participant may be unable to feel a single hair being touched, but may be able to feel two or three as this exceeds the threshold. Absolute threshold is also often referred to as detection threshold. Several different methods are used for measuring absolute thresholds (as with discrimination thresholds; see below).

Discrimination

A difference threshold (or just-noticeable difference, JND) is the magnitude of the smallest difference between two stimuli of differing intensities that the participant is able to detect some proportion of the time (the percentage depending on the kind of task). To test this threshold, several different methods are used. The subject may be asked to adjust one stimulus until it is perceived as the same as the other (method of adjustment), may be asked to describe the direction and magnitude of the difference between two stimuli, or may be asked to decide whether intensities in a pair of stimuli are the same or not (forced choice). The just-noticeable difference (JND) is not a fixed quantity; rather, it depends on how intense the stimuli being measured are and the particular sense being measured.[17] Weber's Law states that the just-noticeable difference of a stimulus is a constant proportion of the stimulus intensity, even as that intensity varies.[18]

In discrimination experiments, the experimenter seeks to determine at what point the difference between two stimuli, such as two weights or two sounds, is detectable. The subject is presented with one stimulus, for example a weight, and is asked to say whether another weight is heavier or lighter (in some experiments, the subject may also say the two weights are the same). At the point of subjective equality (PSE), the subject perceives the two weights to be the same. The just-noticeable difference,[19] or difference limen (DL), is the magnitude of the difference in stimuli that the subject notices some proportion p of the time (50% is usually used for p in the comparison task). In addition, a two-alternative forced choice (2-afc) paradigm can be used to assess the point at which performance reduces to chance on a discrimination between two alternatives (p will then typically be 75% since p=50% corresponds to chance in the 2-afc task).
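Why the 2-afc criterion is 75% rather than 50% can be checked with a small sketch. It assumes a logistic psychometric function and a simple guessing model (when the difference is not noticed, the subject guesses and is right half the time); both are modelling assumptions made here, and the function names are hypothetical.

```python
import math

def detection_probability(delta, jnd, slope=1.0):
    """Assumed logistic psychometric function: chance that a difference
    `delta` is noticed. Equals 0.5 when delta == jnd."""
    return 1.0 / (1.0 + math.exp(-(delta - jnd) / slope))

def two_afc_percent_correct(delta, jnd, slope=1.0):
    """2-afc task: an unnoticed difference leads to a guess, right half the time."""
    p_notice = detection_probability(delta, jnd, slope)
    return p_notice + (1.0 - p_notice) * 0.5

# A difference noticed 50% of the time yields 75% correct in the 2-afc task.
print(two_afc_percent_correct(delta=2.0, jnd=2.0))  # 0.75
```

Under this model, performance falls to 0.5 (chance) as the difference shrinks, so the midpoint between chance and perfect performance sits at 75%.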

Absolute and difference thresholds are sometimes considered similar in principle because there is always background noise interfering with our ability to detect stimuli.[6][20]

https://en.wikipedia.org/wiki/Psychophysics

Classical psychophysical methods

Psychophysical experiments have traditionally used three methods for testing subjects' perception in stimulus detection and difference detection experiments: the method of limits, the method of constant stimuli and the method of adjustment.[22]

Method of limits

In the ascending method of limits, some property of the stimulus starts out at a level so low that the stimulus could not be detected, then this level is gradually increased until the participant reports that they are aware of it. For example, if the experiment is testing the minimum amplitude of sound that can be detected, the sound begins too quietly to be perceived, and is made gradually louder. In the descending method of limits, this is reversed. In each case, the threshold is considered to be the level of the stimulus property at which the stimuli are just detected.[22]

In experiments, the ascending and descending methods are used alternately and the thresholds are averaged. A possible disadvantage of these methods is that the subject may become accustomed to reporting that they perceive a stimulus and may continue reporting the same way even beyond the threshold (the error of habituation). Conversely, the subject may also anticipate that the stimulus is about to become detectable or undetectable and may make a premature judgment (the error of anticipation).

To avoid these potential pitfalls, Georg von Békésy introduced the staircase procedure in 1960 in his study of auditory perception. In this method, the sound starts out audible and gets quieter after each of the subject's responses, until the subject does not report hearing it. At that point, the sound is made louder at each step, until the subject reports hearing it, at which point it is made quieter in steps again. This way the experimenter is able to "zero in" on the threshold.[22]
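The tracking procedure just described can be sketched in a few lines. This is an illustration under simplifying assumptions: the listener is idealised (always hears a sound at or above a fixed threshold), and the function name, step size and stopping rule (a fixed number of reversals) are choices made here, not details from the excerpt.

```python
def track_threshold(true_threshold, start_level, step, n_reversals=6):
    """Quieter after every 'heard', louder after every 'not heard';
    the threshold estimate is the mean level at the direction reversals."""
    level = start_level
    direction = -1            # the sound starts audible, so begin by getting quieter
    reversals = []
    while len(reversals) < n_reversals:
        heard = level >= true_threshold          # idealised, noise-free listener
        new_direction = -1 if heard else +1
        if new_direction != direction:           # the track turns around here
            reversals.append(level)
            direction = new_direction
        level += direction * step
    return sum(reversals) / len(reversals)

print(track_threshold(true_threshold=23, start_level=40, step=5))  # 22.5
```

With a real listener the responses are noisy near threshold, so the reversal levels scatter instead of alternating between two values.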

Method of constant stimuli

Instead of being presented in ascending or descending order, in the method of constant stimuli the levels of a certain property of the stimulus are not related from one trial to the next, but presented randomly. This prevents the subject from being able to predict the level of the next stimulus, and therefore reduces errors of habituation and expectation. For 'absolute thresholds' again the subject reports whether they are able to detect the stimulus.[22] For 'difference thresholds' there has to be a constant comparison stimulus with each of the varied levels.

Friedrich Hegelmaier described the method of constant stimuli in an 1852 paper.[23] This method allows for full sampling of the psychometric function, but can result in a lot of trials when several conditions are interleaved.
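A minimal sketch of the method of constant stimuli for an absolute threshold: every level is repeated a fixed number of times, the trial order is shuffled, and the 50% point is read off the resulting proportions by linear interpolation. The function names, the interpolation step and the `observer` callback are assumptions made for this illustration.

```python
import random

def run_constant_stimuli(levels, n_repeats, observer, rng):
    """Present each level n_repeats times in random order; return the
    proportion of 'detected' responses per level."""
    trials = [level for level in levels for _ in range(n_repeats)]
    rng.shuffle(trials)                      # order is unrelated from trial to trial
    detected = {level: 0 for level in levels}
    for level in trials:
        if observer(level):
            detected[level] += 1
    return {level: detected[level] / n_repeats for level in levels}

def interpolate_threshold(proportions, p=0.5):
    """Level at which the proportion detected first crosses p (linear interpolation)."""
    points = sorted(proportions.items())
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if y0 <= p <= y1 and y1 > y0:
            return x0 + (p - y0) * (x1 - x0) / (y1 - y0)
    return None                              # p is never crossed in the tested range

# Hypothetical noise-free observer who detects levels of 3 and above.
props = run_constant_stimuli([1, 2, 3, 4], 10, lambda level: level >= 3, random.Random(0))
print(interpolate_threshold(props))
```

The trial count is levels times repeats, which is why interleaving several conditions quickly becomes expensive.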

Method of adjustment

In the method of adjustment, the subject is asked to control the level of the stimulus and to alter it until it is just barely detectable against the background noise, or is the same as the level of another stimulus. The adjustment is repeated many times. This is also called the method of average error.[22]

In this method, the observers themselves control the magnitude of the variable stimulus, beginning with a level that is distinctly greater or lesser than a standard one and varying it until they are satisfied by the subjective equality of the two. The difference between the variable stimulus and the standard one is recorded after each adjustment, and the error is tabulated for a considerable series. At the end, the mean is calculated, giving the average error, which can be taken as a measure of sensitivity.
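The tabulation described above amounts to a few lines of arithmetic. In this sketch the settings are made-up numbers and the function name is hypothetical; it reports both the signed mean (a systematic bias) and the mean absolute error, since the excerpt does not say which of the two "average error" refers to.

```python
from statistics import mean

def summarise_adjustments(settings, standard):
    """Errors are the differences between each final setting and the standard."""
    errors = [setting - standard for setting in settings]
    return {
        "constant_error": mean(errors),                    # signed: systematic over/undershoot
        "average_error": mean([abs(e) for e in errors]),   # unsigned: typical miss size
    }

# Four hypothetical adjustments against a standard of 100 units.
print(summarise_adjustments([98, 103, 101, 96], standard=100))
```

A smaller average error indicates finer sensitivity to the difference between the two stimuli.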

Adaptive psychophysical methods

The classic methods of experimentation are often argued to be inefficient. This is because, in advance of testing, the psychometric threshold is usually unknown and most of the data are collected at points on the psychometric function that provide little information about the parameter of interest, usually the threshold. Adaptive staircase procedures (or the classical method of adjustment) can be used such that the points sampled are clustered around the psychometric threshold. Data points can also be spread in a slightly wider range, if the psychometric function's slope is also of interest. Adaptive methods can thus be optimized for estimating the threshold only, or both threshold and slope.

Adaptive methods are classified into staircase procedures (see below) and Bayesian, or maximum-likelihood, methods. Staircase methods rely on the previous response only, and are easier to implement. Bayesian methods take the whole set of previous stimulus-response pairs into account and are generally more robust against lapses in attention.[24] Practical examples are found here.[21]

Staircase procedures

Figure: a specific staircase procedure, the Transformed Up/Down Method (1-up/2-down rule). Until the first reversal (which is neglected), the simple up/down rule and a larger step size are used.

Staircases usually begin with a high intensity stimulus, which is easy to detect. The intensity is then reduced until the observer makes a mistake, at which point the staircase 'reverses' and intensity is increased until the observer responds correctly, triggering another reversal. The values for the last of these 'reversals' are then averaged. There are many different types of staircase procedures, using different decision and termination rules. Step-size, up/down rules and the spread of the underlying psychometric function dictate where on the psychometric function they converge.[24] Threshold values obtained from staircases can fluctuate wildly, so care must be taken in their design. Many different staircase algorithms have been modeled and some practical recommendations suggested by Garcia-Perez.[25]

One of the more common staircase designs (with fixed-step sizes) is the 1-up-N-down staircase. If the participant makes the correct response N times in a row, the stimulus intensity is reduced by one step size. If the participant makes an incorrect response, the stimulus intensity is increased by one step size. A threshold is estimated from the mean midpoint of all runs. This estimate approaches, asymptotically, the correct threshold.
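A minimal sketch of the 1-up-N-down rule follows. Two things are assumptions made here rather than part of the excerpt: the estimate averages the levels at reversals (a common simplification of the "mean midpoint of all runs"), and the run stops after a fixed number of reversals. The helper `convergence_probability` gives the proportion correct the rule settles on, from solving p^N = 0.5.

```python
def convergence_probability(n_down):
    """Proportion correct targeted by a 1-up-N-down rule (solves p**N == 0.5)."""
    return 0.5 ** (1.0 / n_down)

def one_up_n_down(respond, start, step, n_down=2, n_reversals=8):
    """`respond(level)` returns True for a correct response at that level."""
    level, streak, last_move = start, 0, 0
    reversals = []
    while len(reversals) < n_reversals:
        if respond(level):
            streak += 1
            if streak < n_down:
                continue                 # not enough correct responses in a row yet
            streak, move = 0, -1         # N correct in a row: one step down
        else:
            streak, move = 0, +1         # any error: one step up
        if last_move != 0 and move != last_move:
            reversals.append(level)      # direction changed at this level
        last_move = move
        level += move * step
    return sum(reversals) / len(reversals)

print(round(convergence_probability(2), 3))                      # 0.707
print(one_up_n_down(lambda level: level >= 5.5, start=10, step=1))  # 5.5
```

The example observer is noise-free, so the track simply alternates between 5 and 6. With a probabilistic observer the same rule hovers around the level where the proportion correct is about 70.7% for N = 2.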

Bayesian and maximum-likelihood procedures

Bayesian and maximum-likelihood (ML) adaptive procedures behave, from the observer's perspective, similarly to the staircase procedures. The choice of the next intensity level works differently, however: after each observer response, the likelihood of where the threshold lies is calculated from this and all previous stimulus/response pairs. The point of maximum likelihood is then chosen as the best estimate for the threshold, and the next stimulus is presented at that level (since a decision at that level will add the most information). In a Bayesian procedure, a prior likelihood is further included in the calculation.[24]

Compared to staircase procedures, Bayesian and ML procedures are more time-consuming to implement but are considered to be more robust. Well-known procedures of this kind are Quest,[26] ML-PEST,[27] and Kontsevich & Tyler's method.[28]
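The update loop described above can be sketched on a grid of candidate thresholds. This is a generic illustration, not an implementation of Quest, ML-PEST or Kontsevich & Tyler's method; the logistic psychometric function, its fixed slope, the grid, and all names are assumptions made here.

```python
import math

def p_yes(level, threshold, slope=1.0):
    """Assumed logistic psychometric function: 0.5 at level == threshold."""
    return 1.0 / (1.0 + math.exp(-(level - threshold) / slope))

def ml_adaptive(respond, candidates, n_trials=30, slope=1.0, log_prior=None):
    """Flat start gives pure maximum likelihood; passing `log_prior`
    (one value per candidate) turns it into a Bayesian procedure."""
    log_post = list(log_prior) if log_prior is not None else [0.0] * len(candidates)
    level = candidates[len(candidates) // 2]      # first stimulus: middle of the grid
    for _ in range(n_trials):
        yes = respond(level)
        for i, threshold in enumerate(candidates):
            p = p_yes(level, threshold, slope)
            p = min(max(p, 1e-9), 1.0 - 1e-9)     # keep log() finite
            log_post[i] += math.log(p if yes else 1.0 - p)
        best = max(range(len(candidates)), key=lambda i: log_post[i])
        level = candidates[best]                  # next stimulus at the current estimate
    return level

grid = [float(c) for c in range(11)]
print(ml_adaptive(lambda level: level >= 6.2, grid))
```

Because every past stimulus/response pair stays in the running log-likelihood, a single careless response shifts the estimate far less than it would shift a staircase.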

Magnitude estimation

In the prototypical case, people are asked to assign numbers in proportion to the magnitude of the stimulus. The psychophysical function relating the geometric means of their numbers to stimulus magnitude is often a power law with a stable, replicable exponent. Although contexts can change the law and exponent, that change too is stable and replicable. Instead of numbers, other sensory or cognitive dimensions can be used to match a stimulus, and the method then becomes "magnitude production" or "cross-modality matching". The exponents of those dimensions found in numerical magnitude estimation predict the exponents found in magnitude production. Magnitude estimation generally finds lower exponents for the psychophysical function than multiple-category responses, because of the restricted range of the categorical anchors, such as those used by Likert as items in attitude scales.[29]
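Estimating the exponent from magnitude-estimation data can be sketched as a straight-line fit in log-log coordinates, using the geometric mean of the responses at each intensity. The power-law form (response = scale × intensity^exponent), the function names and the example numbers are assumptions made for this illustration.

```python
import math

def geometric_mean(values):
    return math.exp(sum(math.log(v) for v in values) / len(values))

def fit_power_law(intensities, estimates_per_intensity):
    """Least-squares line through (log intensity, log geometric-mean estimate).
    Returns (scale, exponent) of response = scale * intensity ** exponent."""
    xs = [math.log(i) for i in intensities]
    ys = [math.log(geometric_mean(e)) for e in estimates_per_intensity]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    exponent = sxy / sxx
    scale = math.exp(mean_y - exponent * mean_x)
    return scale, exponent

# Made-up responses from two observers at four intensities.
scale, exponent = fit_power_law([1, 4, 16, 64], [[1, 4], [2, 8], [4, 16], [8, 32]])
print(round(scale, 3), round(exponent, 3))  # 2.0 0.5
```

The geometric mean is used because numerical estimates spread multiplicatively across observers, so averaging in log space keeps one generous rater from dominating.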

See also

https://en.wikipedia.org/wiki/Psychophysics

https://en.wikipedia.org/wiki/Stevens%27s_power_law

https://en.wikipedia.org/wiki/Just-noticeable_difference

https://en.wikipedia.org/wiki/Two-alternative_forced_choice

https://en.wikipedia.org/wiki/Response_bias

https://en.wikipedia.org/wiki/Choice_set

https://en.wikipedia.org/wiki/Attentional_shift

https://en.wikipedia.org/wiki/Task_switching_(psychology)

https://en.wikipedia.org/wiki/drift

https://en.wikipedia.org/wiki/Memory

https://en.wikipedia.org/wiki/V1_Saliency_Hypothesis

https://en.wikipedia.org/wiki/Working_memory

https://en.wikipedia.org/wiki/Attention_deficit_hyperactivity_disorder

https://en.wikipedia.org/wiki/Nucleon

https://en.wikipedia.org/wiki/Basal_lamina

https://en.wikipedia.org/wiki/Peritoneum

https://en.wikipedia.org/wiki/Annelid

https://en.wikipedia.org/wiki/Observable_universe

https://en.wikipedia.org/wiki/Immediate_early_gene

https://en.wikipedia.org/wiki/Engram_(neuropsychology)

https://en.wikipedia.org/wiki/Multiple_trace_theory

https://en.wikipedia.org/wiki/Nuclear_fission

https://en.wikipedia.org/wiki/Chicago_Pile-1

https://en.wikipedia.org/wiki/Nuclear_power

https://en.wikipedia.org/wiki/Nuclear_fallout

https://en.wikipedia.org/wiki/Heavy_water

https://en.wikipedia.org/wiki/Oxidation_state

https://en.wikipedia.org/wiki/Ozone

https://en.wikipedia.org/wiki/trihydrogen_cation_universe

https://en.wikipedia.org/wiki/Agar_plate

https://en.wikipedia.org/wiki/Microbiological_culture

https://en.wikipedia.org/wiki/Culture_media

https://en.wikipedia.org/wiki/Suspension_(chemistry)

https://en.wikipedia.org/wiki/colloid

https://en.wikipedia.org/wiki/environment

https://en.wikipedia.org/wiki/STP

https://en.wikipedia.org/wiki/ocean

https://en.wikipedia.org/wiki/fluid_transition

glass crystal unit cell

n-cell pile

standard cell pile

cell component pile

component pile

biological pile

very small biological pile

https://en.wikipedia.org/wiki/Growth_medium

https://en.wikipedia.org/wiki/Glycerol

https://en.wikipedia.org/wiki/Pseudomonas

https://en.wikipedia.org/wiki/Lysogeny_broth

https://en.wikipedia.org/wiki/Yeast_extract

https://en.wikipedia.org/wiki/Cytophaga

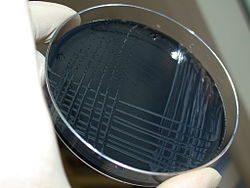

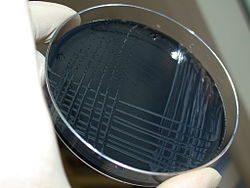

Blood-free, charcoal-based selective medium agar (CSM) for isolation of Campylobacter

https://en.wikipedia.org/wiki/Growth_medium

https://en.wikipedia.org/wiki/Activated_carbon

https://en.wikipedia.org/wiki/Coke_(fuel)

https://en.wikipedia.org/wiki/Coal_tar

https://en.wikipedia.org/wiki/Voltaic_pile

https://en.wikipedia.org/wiki/History_of_the_battery#Zinc-carbon_cell%2C_the_first_dry_cell

https://en.wikipedia.org/wiki/Blue_field_entoptic_phenomenon

https://en.wikipedia.org/wiki/Dry_cell

https://en.wikipedia.org/wiki/Atmospheric_circulation#Ferrel_cell

https://en.wikipedia.org/wiki/Charcoal_pile

https://en.wikipedia.org/wiki/Electroplating

https://en.wikipedia.org/wiki/Cell%E2%80%93cell_interaction

https://en.wikipedia.org/wiki/Electromotive_force#Electromotive_force_of_cells

https://en.wikipedia.org/wiki/Lemon_battery#Smee_cell

https://en.wikipedia.org/wiki/X-10_Graphite_Reactor

https://en.wikipedia.org/wiki/Brain_Cell_Repulsion

https://en.wikipedia.org/wiki/Memory_consolidation

https://en.wikipedia.org/wiki/Long-term_potentiation#Late_phase

https://en.wikipedia.org/wiki/Retrograde_amnesia

https://en.wikipedia.org/wiki/Anterograde_amnesia

https://en.wikipedia.org/wiki/Transient_global_amnesia

https://en.wikipedia.org/wiki/breeders_brood_mare

https://en.wikipedia.org/wiki/Breed

https://en.wikipedia.org/wiki/Breeder-circle

https://en.wikipedia.org/wiki/Brain_transplant

https://en.wikipedia.org/wiki/Brain%E2%80%93computer_interface

Nicolelis

Miguel Nicolelis, a professor at Duke University, in Durham, North Carolina, has been a prominent proponent of using multiple electrodes spread over a greater area of the brain to obtain neuronal signals to drive a BCI.

After conducting initial studies in rats during the 1990s, Nicolelis and his colleagues developed BCIs that decoded brain activity in owl monkeys and used the devices to reproduce monkey movements in robotic arms. Monkeys have advanced reaching and grasping abilities and good hand manipulation skills, making them ideal test subjects for this kind of work.

By 2000, the group succeeded in building a BCI that reproduced owl monkey movements while the monkey operated a joystick or reached for food.[38] The BCI operated in real time and could also control a separate robot remotely over internet protocol. But the monkeys could not see the arm moving and did not receive any feedback, a so-called open-loop BCI.

Figure: Diagram of the BCI developed by Miguel Nicolelis and colleagues for use on rhesus monkeys

Later experiments by Nicolelis using rhesus monkeys succeeded in closing the feedback loop and reproduced monkey reaching and grasping movements in a robot arm. With their deeply cleft and furrowed brains, rhesus monkeys are considered to be better models for human neurophysiology than owl monkeys. The monkeys were trained to reach and grasp objects on a computer screen by manipulating a joystick while corresponding movements by a robot arm were hidden.[39][40] The monkeys were later shown the robot directly and learned to control it by viewing its movements. The BCI used velocity predictions to control reaching movements and simultaneously predicted handgripping force.

In 2011 O'Doherty and colleagues demonstrated a BCI with sensory feedback in rhesus monkeys. The monkey controlled the position of an avatar arm with its brain activity while receiving sensory feedback through direct intracortical microstimulation (ICMS) in the arm representation area of the sensory cortex.[41]

https://en.wikipedia.org/wiki/Brain%E2%80%93computer_interface

Vision

Invasive BCI research has targeted repairing damaged sight and providing new functionality for people with paralysis. Invasive BCIs are implanted directly into the grey matter of the brain during neurosurgery. Because they lie in the grey matter, invasive devices produce the highest quality signals of BCI devices but are prone to scar-tissue build-up, causing the signal to become weaker, or even non-existent, as the body reacts to a foreign object in the brain.[58]

In vision science, direct brain implants have been used to treat non-congenital (acquired) blindness. One of the first scientists to produce a working brain interface to restore sight was private researcher William Dobelle.

Dobelle's first prototype was implanted into "Jerry", a man blinded in adulthood, in 1978. A single-array BCI containing 68 electrodes was implanted onto Jerry's visual cortex and succeeded in producing phosphenes, the sensation of seeing light. The system included cameras mounted on glasses to send signals to the implant. Initially, the implant allowed Jerry to see shades of grey in a limited field of vision at a low frame-rate. This also required him to be hooked up to a mainframe computer, but shrinking electronics and faster computers made his artificial eye more portable and now enable him to perform simple tasks unassisted.[59]

https://en.wikipedia.org/wiki/Brain%E2%80%93computer_interface

Pupil-size oscillation

A 2016 article[101] described an entirely new communication device and non-EEG-based human-computer interface, which requires no visual fixation, or ability to move the eyes at all. The interface is based on covert interest; directing one's attention to a chosen letter on a virtual keyboard, without the need to move one's eyes to look directly at the letter. Each letter has its own (background) circle which micro-oscillates in brightness differently from all of the other letters. The letter selection is based on best fit between unintentional pupil-size oscillation and the background circle's brightness oscillation pattern. Accuracy is additionally improved by the user's mental rehearsing of the words 'bright' and 'dark' in synchrony with the brightness transitions of the letter's circle.
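The "best fit" selection step can be illustrated with a plain correlation. The 2016 article's actual fitting method is not given in this excerpt, so the sketch below is only one plausible reading: it assumes pupil size falls as the attended circle brightens, and picks the letter whose brightness pattern is most negatively correlated with the pupil trace. All names and signals are hypothetical.

```python
import math

def pearson(a, b):
    """Pearson correlation of two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)

def select_letter(pupil_trace, brightness_patterns):
    """Pick the letter whose brightness oscillation best explains the pupil trace
    (most negative correlation: brighter circle, smaller pupil)."""
    return min(brightness_patterns,
               key=lambda letter: pearson(pupil_trace, brightness_patterns[letter]))

# Two letters oscillating at different rates; the simulated pupil follows letter "B".
n = 200
patterns = {
    "A": [math.sin(2 * math.pi * 3 * i / n) for i in range(n)],
    "B": [math.sin(2 * math.pi * 5 * i / n) for i in range(n)],
}
pupil = [4.0 - 0.2 * v for v in patterns["B"]]
print(select_letter(pupil, patterns))  # B
```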

https://en.wikipedia.org/wiki/Brain%E2%80%93computer_interface

Functional near-infrared spectroscopy

In 2014 and 2017, a BCI using functional near-infrared spectroscopy for "locked-in" patients with amyotrophic lateral sclerosis (ALS) was able to restore some basic ability of the patients to communicate with other people.[102][103]

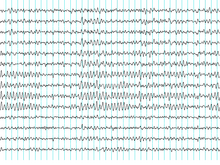

Electroencephalography (EEG)-based brain-computer interfaces

After the BCI challenge was stated by Vidal in 1973, the initial reports on non-invasive approaches included control of a cursor in 2D using VEP (Vidal 1977), control of a buzzer using CNV (Bozinovska et al. 1988, 1990), control of a physical object, a robot, using a brain rhythm (alpha) (Bozinovski et al. 1988), and control of a text written on a screen using P300 (Farwell and Donchin, 1988).[14]

In the early days of BCI research, another substantial barrier to using electroencephalography (EEG) as a brain–computer interface was the extensive training required before users can work the technology. For example, in experiments beginning in the mid-1990s, Niels Birbaumer at the University of Tübingen in Germany trained severely paralysed people to self-regulate the slow cortical potentials in their EEG to such an extent that these signals could be used as a binary signal to control a computer cursor.[104] (Birbaumer had earlier trained epileptics to prevent impending fits by controlling this low voltage wave.) The experiment saw ten patients trained to move a computer cursor by controlling their brainwaves. The process was slow, requiring more than an hour for patients to write 100 characters with the cursor, while training often took many months. However, the slow cortical potential approach to BCIs has not been used in several years, since other approaches require little or no training, are faster and more accurate, and work for a greater proportion of users.

Another research parameter is the type of oscillatory activity that is measured. Gert Pfurtscheller founded the BCI Lab in 1991 and fed his research results on motor imagery into the first online BCI based on oscillatory features and classifiers. Together with Birbaumer and Jonathan Wolpaw at New York State University, they focused on developing technology that would allow users to choose the brain signals they found easiest to operate a BCI, including mu and beta rhythms.

A further parameter is the method of feedback used and this is shown in studies of P300 signals. Patterns of P300 waves are generated involuntarily (stimulus-feedback) when people see something they recognize and may allow BCIs to decode categories of thoughts without training patients first. By contrast, the biofeedback methods described above require learning to control brainwaves so the resulting brain activity can be detected.

In 2005, research was reported on EEG emulation of digital control circuits for BCI, with the example of a CNV flip-flop.[105] In 2009, noninvasive EEG control of a robotic arm using a CNV flip-flop was reported.[106] In 2011, control of two robotic arms solving a Tower of Hanoi task with three disks using a CNV flip-flop was reported.[107] In 2015, EEG emulation of a Schmitt trigger, flip-flop, demultiplexer, and modem was described.[108]

While an EEG-based brain-computer interface has been pursued extensively by a number of research labs, recent advancements made by Bin He and his team at the University of Minnesota suggest the potential of an EEG-based brain-computer interface to accomplish tasks close to those of an invasive brain-computer interface. Using advanced functional neuroimaging including BOLD functional MRI and EEG source imaging, Bin He and co-workers identified the co-variation and co-localization of electrophysiological and hemodynamic signals induced by motor imagination.[109]

Refined by a neuroimaging approach and by a training protocol, Bin He and co-workers demonstrated the ability of a non-invasive EEG-based brain-computer interface to control the flight of a virtual helicopter in 3-dimensional space, based upon motor imagination.[110] In June 2013 it was announced that Bin He had developed the technique to enable a remote-control helicopter to be guided through an obstacle course.[111]

In addition to a brain-computer interface based on brain waves, as recorded from scalp EEG electrodes, Bin He and co-workers explored a virtual EEG signal-based brain-computer interface by first solving the EEG inverse problem and then using the resulting virtual EEG for brain-computer interface tasks. Well-controlled studies suggested the merits of such a source-analysis-based brain-computer interface.[112]

A 2014 study found that severely motor-impaired patients could communicate faster and more reliably with non-invasive EEG BCI than with any muscle-based communication channel.[113]

A 2016 study found that the Emotiv EPOC device may be more suitable for control tasks using the attention/meditation level or eye blinking than the Neurosky MindWave device.[114]

A 2019 study found that the application of evolutionary algorithms could improve EEG mental state classification with a non-invasive Muse device, enabling high quality classification of data acquired by a cheap consumer-grade EEG sensing device.[115]

In a 2021 systematic review of randomized controlled trials using BCI for upper-limb rehabilitation after stroke, EEG-based BCI was found to have significant efficacy in improving upper-limb motor function compared to control therapies. More specifically, BCI studies that utilized band power features, motor imagery, and functional electrical stimulation in their design were found to be more efficacious than alternatives.[116]

Another 2021 systematic review focused on robotic-assisted EEG-based BCI for hand rehabilitation after stroke. Improvement in motor assessment scores was observed in three of eleven studies included in the systematic review.[117]

Dry active electrode arrays

In the early 1990s Babak Taheri, at the University of California, Davis, demonstrated the first single and also multichannel dry active electrode arrays using micro-machining. The single channel dry EEG electrode construction and results were published in 1994.[118] The arrayed electrode was also demonstrated to perform well compared to silver/silver chloride electrodes. The device consisted of four sites of sensors with integrated electronics to reduce noise by impedance matching.

The advantages of such electrodes are: (1) no electrolyte used, (2) no skin preparation, (3) significantly reduced sensor size, and (4) compatibility with EEG monitoring systems. The active electrode array is an integrated system made of an array of capacitive sensors with local integrated circuitry housed in a package with batteries to power the circuitry. This level of integration was required to achieve the functional performance obtained by the electrode.

The electrode was tested on an electrical test bench and on human subjects in four modalities of EEG activity, namely: (1) spontaneous EEG, (2) sensory event-related potentials, (3) brain stem potentials, and (4) cognitive event-related potentials. The performance of the dry electrode compared favorably with that of the standard wet electrodes in terms of skin preparation, no gel requirements (dry), and higher signal-to-noise ratio.[119]

In 1999 researchers at Case Western Reserve University, in Cleveland, Ohio, led by Hunter Peckham, used a 64-electrode EEG skullcap to return limited hand movements to quadriplegic

Jim Jatich. As Jatich concentrated on simple but opposite concepts like

up and down, his beta-rhythm EEG output was analysed using software to

identify patterns in the noise. A basic pattern was identified and used

to control a switch: Above average activity was set to on, below average

off. As well as enabling Jatich to control a computer cursor the

signals were also used to drive the nerve controllers embedded in his

hands, restoring some movement.[120]
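The on/off rule described above can be sketched as follows. This is a minimal illustration, not the software used in the study: the function name `beta_switch`, the idea of a separate calibration baseline, and the sample values are all assumptions made for the example.

```python
from statistics import mean

def beta_switch(beta_power, baseline):
    """Map each beta-band power sample to an on/off state.

    A sample above the average of the baseline recording switches on;
    a sample at or below that average switches off.
    """
    threshold = mean(baseline)
    return [sample > threshold for sample in beta_power]

# Hypothetical calibration and live readings (arbitrary units).
baseline = [4.0, 6.0, 5.0, 5.0]
states = beta_switch([5.5, 4.2, 7.1, 5.0], baseline)
print(states)
```

A real system would likely smooth the signal and add hysteresis so that the switch does not flicker when activity hovers near the average.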

SSVEP mobile EEG BCIs

In 2009, the NCTU Brain-Computer-Interface-headband was reported. The

researchers who developed this BCI-headband also engineered

silicon-based microelectro-mechanical system (MEMS) dry electrodes designed for application in non-hairy sites of the body. These electrodes were secured to the DAQ board in the headband with snap-on electrode holders. The signal processing module measured alpha

activity and the Bluetooth enabled phone assessed the patients'

alertness and capacity for cognitive performance. When the subject

became drowsy, the phone sent arousing feedback to the operator to rouse

them. This research was supported by the National Science Council,

Taiwan, R.O.C., NSC, National Chiao-Tung University, Taiwan's Ministry

of Education, and the U.S. Army Research Laboratory.[121]

In 2011, researchers reported a cellular based BCI with the

capability of taking EEG data and converting it into a command to cause

the phone to ring. This research was supported in part by Abraxis Bioscience

LLP, the U.S. Army Research Laboratory, and the Army Research Office.

The developed technology was a wearable system composed of a four

channel bio-signal acquisition/amplification module,

a wireless transmission module, and a Bluetooth enabled cell phone.

The electrodes were placed so that they pick up steady state visual

evoked potentials (SSVEPs).[122] SSVEPs are electrical responses to flickering visual stimuli with repetition rates over 6 Hz[122] that are best found in the parietal and occipital scalp regions of the visual cortex.[123][124][125]

It was reported that with this BCI setup, all study participants were

able to initiate the phone call with minimal practice in natural

environments.[126]

The scientists claim that their studies using a single channel fast Fourier transform (FFT) and multiple channel system canonical correlation analysis (CCA) algorithm support the capacity of mobile BCIs.[122][127]

The CCA algorithm has been applied in other experiments investigating

BCIs with claimed high performance in accuracy as well as speed.[128]
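To make the two approaches concrete, the sketch below detects which flicker frequency a subject is attending to, first from the FFT power spectrum of a single channel and then with canonical correlation analysis across several channels. It is an illustration on synthetic data, not the researchers' implementation: the sampling rate, candidate frequencies, number of harmonics, and all function names are assumptions.

```python
import numpy as np

def fft_detect(signal, fs, candidates):
    """Single channel: pick the candidate frequency with the most spectral power."""
    spectrum = np.abs(np.fft.rfft(signal)) ** 2
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / fs)
    powers = [spectrum[np.argmin(np.abs(freqs - f))] for f in candidates]
    return candidates[int(np.argmax(powers))]

def max_canonical_corr(X, Y):
    """Largest canonical correlation between the column spaces of X and Y."""
    X = X - X.mean(axis=0)
    Y = Y - Y.mean(axis=0)
    qx, _ = np.linalg.qr(X)  # orthonormal basis for the centered EEG channels
    qy, _ = np.linalg.qr(Y)  # orthonormal basis for the centered references
    return np.linalg.svd(qx.T @ qy, compute_uv=False)[0]

def cca_detect(eeg, fs, candidates, harmonics=2):
    """Multiple channels: eeg has shape (samples, channels)."""
    t = np.arange(eeg.shape[0]) / fs
    scores = []
    for f in candidates:
        # Sine and cosine references at the stimulus frequency and its harmonics.
        refs = [fn(2 * np.pi * h * f * t)
                for h in range(1, harmonics + 1)
                for fn in (np.sin, np.cos)]
        scores.append(max_canonical_corr(eeg, np.column_stack(refs)))
    return candidates[int(np.argmax(scores))]

# Synthetic four-channel recording containing an 11 Hz response plus noise.
rng = np.random.default_rng(0)
fs, seconds = 250, 4
t = np.arange(fs * seconds) / fs
candidates = [9.0, 10.0, 11.0, 12.0]
eeg = np.column_stack([
    np.sin(2 * np.pi * 11.0 * t + phase) + rng.normal(scale=1.0, size=t.size)
    for phase in (0.0, 0.5, 1.0, 1.5)
])
print(fft_detect(eeg[:, 0], fs, candidates), cca_detect(eeg, fs, candidates))
```

The CCA variant uses every channel at once and does not depend on the phase of the response, which is one plausible reason it is favoured for multi-channel systems.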

While the cellular based BCI technology was developed to initiate a

phone call from SSVEPs, the researchers said that it can be translated

for other applications, such as picking up sensorimotor mu/beta rhythms to function as a motor-imagery based BCI.[122]

In 2013, comparative tests were performed on Android cell phone, tablet, and computer based BCIs, analyzing the power spectrum density

of resultant EEG SSVEPs. The stated goals of this study, which involved

scientists supported in part by the U.S. Army Research Laboratory, were

to "increase the practicability, portability, and ubiquity of an

SSVEP-based BCI, for daily use". It was reported that the

stimulation frequency on all mediums was accurate, although the cell

phone's signal demonstrated some instability. The amplitudes of the

SSVEPs for the laptop and tablet were also reported to be larger than

those of the cell phone. These two qualitative characterizations were

suggested as indicators of the feasibility of using a mobile stimulus

BCI.[127]

Limitations

In 2011, researchers stated that continued work should address ease of use, performance robustness, and the reduction of hardware and software costs.[122]

One of the difficulties with EEG readings is the large susceptibility to motion artifacts.[129]

In most of the previously described research projects, the participants

were asked to sit still, reducing head and eye movements as much as

possible, and measurements were taken in a laboratory setting. However,

since the emphasized application of these initiatives had been in

creating a mobile device for daily use,[127] the technology had to be tested in motion.

In 2013, researchers tested mobile EEG-based BCI technology,

measuring SSVEPs from participants as they walked on a treadmill at

varying speeds. This research was supported by the Office of Naval Research,

Army Research Office, and the U.S. Army Research Laboratory. Stated

results were that as speed increased the SSVEP detectability using CCA

decreased. As independent component analysis (ICA) had been shown to be efficient in separating EEG signals from noise,[130]

the scientists applied ICA to CCA extracted EEG data. They stated that

the CCA data with and without ICA processing were similar. Thus, they

concluded that CCA independently demonstrated a robustness to motion

artifacts that indicates it may be a beneficial algorithm to apply to

BCIs used in real world conditions.[124]

One of the major problems in EEG-based BCI applications is the low

spatial resolution. Several solutions have been suggested to address

this issue since 2019, which include: EEG source connectivity based on

graph theory, EEG pattern recognition based on Topomap, EEG-fMRI fusion,

and so on.

Prosthesis and environment control

Non-invasive BCIs have also been applied to enable brain-control of prosthetic upper

and lower extremity devices in people with paralysis. For example, Gert

Pfurtscheller of Graz University of Technology and colleagues demonstrated a BCI-controlled functional electrical stimulation system to restore upper extremity movements in a person with tetraplegia due to spinal cord injury.[131] Between 2012 and 2013, researchers at the University of California, Irvine

demonstrated for the first time that it is possible to use BCI

technology to restore brain-controlled walking after spinal cord injury.

In their spinal cord injury research study, a person with paraplegia was able to operate a BCI-robotic gait orthosis to regain basic brain-controlled ambulation.[132][133]

In 2009 Alex Blainey, an independent researcher based in the UK, successfully used the Emotiv EPOC to control a 5 axis robot arm.[134] He then went on to make several demonstration mind controlled wheelchairs and home automation that could be operated by people with limited or no motor control such as those with paraplegia and cerebral palsy.

Research into military use of BCIs funded by DARPA has been ongoing since the 1970s.[3][4] The current focus of research is user-to-user communication through analysis of neural signals.[135]

DIY and open source BCI

In 2001, The OpenEEG Project[136]

was initiated by a group of DIY neuroscientists and engineers. The

ModularEEG was the primary device created by the OpenEEG community; it

was a 6-channel signal capture board that cost between $200 and $400 to

make at home. The OpenEEG Project marked a significant moment in the

emergence of DIY brain-computer interfacing.

In 2010, the Frontier Nerds of NYU's ITP program published a thorough tutorial titled How To Hack Toy EEGs.[137]

The tutorial, which stirred the minds of many budding DIY BCI

enthusiasts, demonstrated how to create a single channel at-home EEG

with an Arduino and a Mattel Mindflex at a very reasonable price. This tutorial amplified the DIY BCI movement.

MEG and MRI

ATR Labs' reconstruction of human vision using fMRI (top row: original image; bottom row: reconstruction from mean of combined readings)

Magnetoencephalography (MEG) and functional magnetic resonance imaging (fMRI) have both been used successfully as non-invasive BCIs.[138] In a widely reported experiment, fMRI allowed two users being scanned to play Pong in real-time by altering their haemodynamic response or brain blood flow through biofeedback techniques.[139]

fMRI measurements of haemodynamic responses in real time have

also been used to control robot arms with a seven-second delay between

thought and movement.[140]

In 2008 research developed in the Advanced Telecommunications Research (ATR) Computational Neuroscience Laboratories in Kyoto,

Japan, allowed the scientists to reconstruct images directly from the

brain and display them on a computer in black and white at a resolution of 10x10 pixels. The article announcing these achievements was the cover story of the journal Neuron of 10 December 2008.[141]

In 2011 researchers from UC Berkeley published[142]

a study reporting second-by-second reconstruction of videos watched by

the study's subjects, from fMRI data. This was achieved by creating a

statistical model relating visual patterns in videos shown to the

subjects, to the brain activity caused by watching the videos. This

model was then used to look up the 100 one-second video segments, in a

database of 18 million seconds of random YouTube

videos, whose visual patterns most closely matched the brain activity

recorded when subjects watched a new video. These 100 one-second video

extracts were then combined into a mashed-up image that resembled the

video being watched.[143][144][145]
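The lookup-and-average step can be sketched as below. This is a toy illustration under stated assumptions, not the published method: it assumes the statistical model has already produced a predicted activity vector for every database clip, it ranks clips by Pearson correlation, and it represents each clip by a single frame. The function name `reconstruct` and the array sizes are hypothetical.

```python
import numpy as np

def reconstruct(measured, predicted, clips, k=100):
    """Average the k clips whose predicted activity best matches the measurement.

    measured:  (voxels,) recorded activity for one second of an unknown video
    predicted: (n_clips, voxels) model-predicted activity for each database clip
    clips:     (n_clips, height, width) one representative frame per clip
    """
    m = (measured - measured.mean()) / measured.std()
    p = (predicted - predicted.mean(axis=1, keepdims=True)) / predicted.std(axis=1, keepdims=True)
    similarity = p @ m / measured.size   # Pearson correlation per clip
    top = np.argsort(similarity)[-k:]    # indices of the k best matches, best last
    return clips[top].mean(axis=0), top

# Toy database: 500 clips, 50 voxels, 8x8 frames (the study used far larger sets).
rng = np.random.default_rng(1)
predicted = rng.normal(size=(500, 50))
clips = rng.random(size=(500, 8, 8))
measured = predicted[42] + rng.normal(scale=0.1, size=50)
image, top = reconstruct(measured, predicted, clips, k=10)
print(image.shape, 42 in top)
```

Averaging many near matches is what gives the result its blurred, "mashed-up" look: no single clip is the answer, but their shared structure resembles the stimulus.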

BCI control strategies in neurogaming

Motor imagery

Motor imagery involves the imagination of the movement of various body parts resulting in sensorimotor cortex

activation, which modulates sensorimotor oscillations in the EEG. This

can be detected by the BCI to infer a user's intent. Motor imagery

typically requires a number of sessions of training before acceptable

control of the BCI is acquired. These training sessions may take a

number of hours over several days before users can consistently employ

the technique with acceptable levels of precision. Some users remain unable to master the control scheme regardless of the duration of training, which results in a very slow pace of gameplay.[146]

Advanced machine learning methods were recently developed to compute a

subject-specific model for detecting the performance of motor imagery.

The top performing algorithm from BCI Competition IV[147] dataset 2 for motor imagery is the Filter Bank Common Spatial Pattern, developed by Ang et al. from A*STAR, Singapore.[148]

Bio/neurofeedback for passive BCI designs

Biofeedback is used to monitor a subject's mental relaxation. In some cases,

biofeedback does not monitor electroencephalography (EEG), but instead

bodily parameters such as electromyography (EMG), galvanic skin resistance (GSR), and heart rate variability (HRV). Many biofeedback systems are used to treat certain disorders such as attention deficit hyperactivity disorder (ADHD),

sleep problems in children, teeth grinding, and chronic pain. EEG

biofeedback systems typically monitor four different bands (theta:

4–7 Hz, alpha: 8–12 Hz, SMR: 12–15 Hz, beta: 15–18 Hz) and challenge the

subject to control them. Passive BCI[55]

involves using BCI to enrich human–machine interaction with implicit

information on the actual user's state, for example, simulations to

detect when users intend to push brakes during an emergency car stopping

procedure. Game developers using passive BCIs need to acknowledge that

through repetition of game levels the user's cognitive state will change

or adapt. Within the first play

of a level, the user will react to things differently from during the

second play: for example, the user will be less surprised at an event in

the game if they are expecting it.[146]
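As an illustration of how an EEG biofeedback system might monitor the four bands listed above, the sketch below sums FFT power inside each band. The band limits come from the text; treating each band as a half-open interval (so that 12 Hz counts as SMR rather than alpha), the sampling rate, and the function name `band_powers` are assumptions made for the example.

```python
import numpy as np

# Band limits in Hz, taken from the text above.
BANDS = {"theta": (4, 7), "alpha": (8, 12), "SMR": (12, 15), "beta": (15, 18)}

def band_powers(signal, fs):
    """Return the summed spectral power of the signal in each monitored band."""
    spectrum = np.abs(np.fft.rfft(signal)) ** 2
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / fs)
    return {
        name: float(spectrum[(freqs >= low) & (freqs < high)].sum())
        for name, (low, high) in BANDS.items()
    }

# Synthetic two-second recording dominated by a 10 Hz (alpha) rhythm.
fs = 128
t = np.arange(2 * fs) / fs
powers = band_powers(np.sin(2 * np.pi * 10 * t), fs)
print(max(powers, key=powers.get))
```

A feedback loop would recompute these values over a sliding window and reward the subject when the targeted band rises or falls as intended.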

Visual evoked potential (VEP)

A VEP is an electrical potential recorded after a subject is presented with a visual stimulus. There are several types of VEPs.

Steady-state visually evoked potentials (SSVEPs) use potentials generated by exciting the retina,

using visual stimuli modulated at certain frequencies. SSVEP stimuli

are often formed from alternating checkerboard patterns and at times

simply use flashing images. The frequency of the phase reversal of the

stimulus used can be clearly distinguished in the spectrum of an EEG;

this makes detection of SSVEP stimuli relatively easy. SSVEP has proved

to be successful within many BCI systems. This is due to several factors: the signal elicited is measurable in as large a population as the transient VEP, and blink movement and electrocardiographic artefacts do not affect the frequencies monitored. In addition, the SSVEP signal is exceptionally robust; the topographic organization of the primary visual cortex is such that a broader area obtains afferents from the central or foveal region of the visual field. SSVEP does have several

problems however. As SSVEPs use flashing stimuli to infer a user's

intent, the user must gaze at one of the flashing or iterating symbols

in order to interact with the system. It is, therefore, likely that the symbols could become irritating and uncomfortable during longer play sessions, which can often last more than an hour, making for less than ideal gameplay.

Another type of VEP used with applications is the P300 potential.

The P300 event-related potential is a positive peak in the EEG that

occurs at roughly 300 ms after the appearance of a target stimulus (a

stimulus for which the user is waiting or seeking) or oddball stimuli.

The P300 amplitude decreases as the target stimuli and the ignored

stimuli grow more similar. The P300 is thought to be related to a higher-level attention process or an orienting response. Using the P300 as a control scheme has the advantage that the participant only has to attend limited training sessions. The first application to use the P300 model

was the P300 matrix. Within this system, a subject would choose a letter

from a grid of 6 by 6 letters and numbers. The rows and columns of the

grid flashed sequentially and every time the selected "choice letter"

was illuminated the user's P300 was (potentially) elicited. However, the

communication process, at approximately 17 characters per minute, was

quite slow. The P300 is a BCI that offers a discrete selection rather

than a continuous control mechanism. The advantage of P300 use within

games is that the player does not have to teach himself/herself how to

use a completely new control system and so only has to undertake short

training instances, to learn the gameplay mechanics and basic use of the

BCI paradigm.[146]
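The selection logic of the P300 matrix can be sketched as follows: average the response measured after each row flash and each column flash, then pick the symbol where the strongest row and strongest column intersect. This is a simplified illustration. The grid layout, the function names, and the amplitude values are hypothetical, and a real speller would classify whole EEG epochs rather than single amplitudes.

```python
# Hypothetical 6 by 6 grid of letters and numbers.
GRID = [list("ABCDEF"), list("GHIJKL"), list("MNOPQR"),
        list("STUVWX"), list("YZ1234"), list("56789_")]

def average_scores(flashes, size=6):
    """Average the response amplitudes recorded after each flash index."""
    sums, counts = [0.0] * size, [0] * size
    for index, amplitude in flashes:
        sums[index] += amplitude
        counts[index] += 1
    return [s / c if c else 0.0 for s, c in zip(sums, counts)]

def pick_symbol(row_scores, col_scores):
    """Return the symbol at the row and column with the largest average response."""
    row = max(range(len(row_scores)), key=row_scores.__getitem__)
    col = max(range(len(col_scores)), key=col_scores.__getitem__)
    return GRID[row][col]

# (flash index, amplitude roughly 300 ms after the flash), arbitrary units.
row_flashes = [(0, 1.0), (2, 4.5), (1, 0.8), (2, 5.5), (3, 1.2), (4, 0.9), (5, 1.1)]
col_flashes = [(3, 5.0), (0, 1.1), (3, 4.0), (1, 0.7), (2, 1.3), (4, 1.0), (5, 0.6)]
choice = pick_symbol(average_scores(row_flashes), average_scores(col_flashes))
print(choice)
```

Because each selection needs several repetitions of every row and column flash before the averages separate, the approach yields discrete choices at a limited rate rather than continuous control.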

Synthetic telepathy/silent communication

In a $6.3 million US Army initiative to invent devices for telepathic communication, Gerwin Schalk, underwritten by a $2.2 million grant, found that ECoG signals can be used to discriminate the vowels and consonants embedded in spoken and imagined words. The findings shed light on the distinct mechanisms associated with the production of vowels and consonants, and could provide the basis for brain-based communication using imagined speech.[97][149]

In 2002 Kevin Warwick

had an array of 100 electrodes fired into his nervous system in order

to link his nervous system into the Internet to investigate enhancement

possibilities. With this in place Warwick successfully carried out a

series of experiments. With electrodes also implanted into his wife's

nervous system, they conducted the first direct electronic communication

experiment between the nervous systems of two humans.[150][151][152][153]

Another group of researchers was able to achieve conscious

brain-to-brain communication between two people separated by a distance

using non-invasive technology that was in contact with the scalp of the

participants. The words were encoded as binary streams of 0s and 1s by the imagined motor input of the person "emitting" the information. In this experiment, pseudo-random bit sequences encoding the words "hola" ("hi" in Spanish) and "ciao" ("goodbye" in Italian) were transmitted mind-to-mind between humans separated by a distance, with motor and sensory systems blocked, a result with little to no probability of occurring by chance (reported in "Conscious Brain-to-Brain Communication in Humans Using Non-Invasive Technologies").
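To illustrate what encoding a word as a binary stream involves, the sketch below maps each lowercase letter to a 5-bit code and back. The specific scheme (alphabet position written as five binary digits) and the function names are assumptions chosen for simplicity; the text does not specify the cipher the experimenters used.

```python
def encode(word):
    """Encode a lowercase word as a flat list of bits, five per letter."""
    return [int(bit) for ch in word for bit in format(ord(ch) - ord("a"), "05b")]

def decode(bits):
    """Invert encode(): read five bits at a time and map them back to letters."""
    letters = []
    for i in range(0, len(bits), 5):
        value = int("".join(str(b) for b in bits[i:i + 5]), 2)
        letters.append(chr(value + ord("a")))
    return "".join(letters)

stream = encode("hola")
print(stream)
print(decode(stream), decode(encode("ciao")))
```

In this scheme each four-letter word becomes 20 bits, and each bit would be sent as one of two imagined movements on the emitting side.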

In the 1960s a researcher was successful after some training in

using EEG to create Morse code using their brain alpha waves. Research

funded by the US army is being conducted with the goal of allowing users

to compose a message in their head, then transfer that message with

just the power of thought to a particular individual.[154] On 27 February 2013 the group with Miguel Nicolelis at Duke University

and IINN-ELS successfully connected the brains of two rats with

electronic interfaces that allowed them to directly share information,

in the first-ever direct brain-to-brain interface.[155][156][157]

Cell-culture BCIs

Researchers have built devices to interface with neural cells and

entire neural networks in cultures outside animals. As well as

furthering research on animal implantable devices, experiments on

cultured neural tissue have focused on building problem-solving

networks, constructing basic computers and manipulating robotic devices.

Research into techniques for stimulating and recording from individual

neurons grown on semiconductor chips is sometimes referred to as

neuroelectronics or neurochips.[158]

The world's first neurochip, developed by Caltech researchers Jerome Pine and Michael Maher

Development of the first working neurochip was claimed by a Caltech team led by Jerome Pine and Michael Maher in 1997.[159] The Caltech chip had room for 16 neurons.

In 2003 a team led by Theodore Berger, at the University of Southern California, started work on a neurochip designed to function as an artificial or prosthetic hippocampus.

The neurochip was designed to function in rat brains and was intended

as a prototype for the eventual development of higher-brain prosthesis.

The hippocampus was chosen because it is thought to be the most ordered

and structured part of the brain and is the most studied area. Its

function is to encode experiences for storage as long-term memories

elsewhere in the brain.[160]

In 2004 Thomas DeMarse at the University of Florida used a culture of 25,000 neurons taken from a rat's brain to fly an F-22 fighter jet aircraft simulator.[161] After collection, the cortical neurons were cultured in a petri dish

and rapidly began to reconnect themselves to form a living neural

network. The cells were arranged over a grid of 60 electrodes and used

to control the pitch and yaw

functions of the simulator. The study's focus was on understanding how

the human brain performs and learns computational tasks at a cellular

level.

Collaborative BCIs

The idea of combining/integrating brain signals from multiple individuals

was introduced at Humanity+ @Caltech, in December 2010, by a Caltech researcher at JPL, Adrian Stoica; Stoica referred to the concept as multi-brain aggregation.[162][163][164] A provisional patent application was filed on January 19, 2011, with the non-provisional patent following one year later.[165] In May 2011, Yijun Wang and Tzyy-Ping Jung published, "A Collaborative Brain-Computer Interface for Improving Human Performance", and in January 2012 Miguel Eckstein published, "Neural decoding of collective wisdom with multi-brain computing".[166][167] Stoica's first paper on the topic appeared in 2012, after the publication of his patent application.[168]

Given the timing of the publications between the patent and papers,

Stoica, Wang & Jung, and Eckstein independently pioneered the

concept, and are all considered as founders of the field. Later, Stoica

would collaborate with University of Essex researchers, Riccardo Poli and Caterina Cinel.[169][170] The work was continued by Poli and Cinel, and their students: Ana Matran-Fernandez, Davide Valeriani, and Saugat Bhattacharyya.[171][172][173]

Ethical considerations

Sources:[174][175][176][177][178]

User-centric issues

- Long-term effects to the user remain largely unknown.

- Obtaining informed consent from people who have difficulty communicating.

- The consequences of BCI technology for the quality of life of patients and their families.

- Health-related side-effects (e.g. neurofeedback of sensorimotor rhythm training is reported to affect sleep quality).

- Therapeutic applications and their potential misuse.

- Safety risks

- Non-convertibility of some of the changes made to the brain

Legal and social

- Issues of accountability and responsibility: claims that the influence of BCIs overrides free will and control over sensory-motor actions, and claims that cognitive intention was inaccurately translated due to a BCI malfunction.

- Personality changes caused by deep-brain stimulation.

- Concerns regarding the state of becoming a "cyborg" - having parts of the body that are living and parts that are mechanical.

- Questions of personality: what does it mean to be human?

- Blurring of the division between human and machine and inability to distinguish between human vs. machine-controlled actions.

- Use of the technology in advanced interrogation techniques by governmental authorities.

- Selective enhancement and social stratification.

- Questions of research ethics regarding animal experimentation

- Questions of research ethics that arise when progressing from animal experimentation to application in human subjects.

- Moral questions

- Mind reading and privacy.

- Tracking and "tagging system"

- Mind control.

- Movement control

- Emotion control

In their current form, most BCIs are far removed from the ethical

issues considered above. They are actually similar to corrective

therapies in function. Clausen stated in 2009 that "BCIs pose ethical

challenges, but these are conceptually similar to those that

bioethicists have addressed for other realms of therapy".[174]

Moreover, he suggests that bioethics is well-prepared to deal with the

issues that arise with BCI technologies. Haselager and colleagues[175]

pointed out that expectations of BCI efficacy and value play a great

role in ethical analysis and the way BCI scientists should approach

media. Furthermore, standard protocols can be implemented to ensure

ethically sound informed-consent procedures with locked-in patients.

The case of BCIs today has parallels in medicine, as will its

evolution. Similar to how pharmaceutical science began as a balance for

impairments and is now used to increase focus and reduce need for sleep,

BCIs will likely transform gradually from therapies to enhancements.[177]

Efforts are made inside the BCI community to create consensus on

ethical guidelines for BCI research, development and dissemination.[178]

As innovation continues, ensuring equitable access to BCIs will be crucial; without it, generational inequalities may arise that adversely affect the right to human flourishing.[179]

The ethical considerations of BCIs are essential to the

development of future implanted devices. End-users, ethicists,

researchers, funding agencies, physicians, corporations, and all others

involved in BCI use should consider the anticipated, and unanticipated,

changes that BCIs will have on human autonomy, identity, privacy, and

more.[71]

Low-cost BCI-based interfaces

Recently a number of companies have scaled back medical grade EEG

technology to create inexpensive BCIs for research as well as

entertainment purposes. For example, toys such as the NeuroSky and

Mattel MindFlex have seen some commercial success.

- In 2006 Sony patented a neural interface system allowing radio waves to affect signals in the neural cortex.[180]

- In 2007 NeuroSky released the first affordable consumer based EEG along with the game NeuroBoy. This was also the first large scale EEG device to use dry sensor technology.[181]

- In 2008 OCZ Technology developed a device for use in video games relying primarily on electromyography.[182]

- In 2008 Final Fantasy developer Square Enix announced that it was partnering with NeuroSky to create a game, Judecca.[183][184]

- In 2009 Mattel partnered with NeuroSky to release the Mindflex, a game that used an EEG to steer a ball through an obstacle course. It is by far the best selling consumer based EEG to date.[183][185]

- In 2009 Uncle Milton Industries partnered with NeuroSky to release the Star Wars Force Trainer, a game designed to create the illusion of possessing the Force.[183][186]

- In 2009 Emotiv released the EPOC, a 14 channel EEG device that can read 4 mental states, 13 conscious states, facial expressions, and head movements. The EPOC is the first commercial BCI to use dry sensor technology, which can be dampened with a saline solution for a better connection.[187]

- In November 2011 Time magazine selected "necomimi" produced by Neurowear

as one of the best inventions of the year. The company announced that

it expected to launch a consumer version of the garment, consisting of

catlike ears controlled by a brain-wave reader produced by NeuroSky, in spring 2012.[188]

- In February 2014 They Shall Walk (a nonprofit organization fixed on

constructing exoskeletons, dubbed LIFESUITs, for paraplegics and

quadriplegics) began a partnership with James W. Shakarji on the

development of a wireless BCI.[189]

- In 2016, a group of hobbyists developed an open-source BCI board

that sends neural signals to the audio jack of a smartphone, dropping

the cost of entry-level BCI to £20.[190] Basic diagnostic software is available for Android devices, as well as a text entry app for Unity.[191]

- In 2020, NextMind released a dev kit including an EEG headset with dry electrodes at $399.[192][193]

The device can be played with some demo applications or developers can

create their own use cases using the provided Software Development Kit.

Future directions

A consortium consisting of 12 European partners has completed a

roadmap to support the European Commission in their funding decisions

for the new framework program Horizon 2020. The project, which was funded by the European Commission, started in November 2013 and published a roadmap in April 2015.[194]

A 2015 publication led by Dr. Clemens Brunner describes some of the

analyses and achievements of this project, as well as the emerging

Brain-Computer Interface Society.[195]

For example, this article reviewed work within this project that

further defined BCIs and applications, explored recent trends, discussed

ethical issues, and evaluated different directions for new BCIs.

Other recent publications have also explored future BCI directions for new groups of disabled users (e.g.,[11][196]).

Disorders of consciousness (DOC)

Some persons have a disorder of consciousness

(DOC). This state is defined to include persons with coma, as well as

persons in a vegetative state (VS) or minimally conscious state (MCS).

New BCI research seeks to help persons with DOC in different ways. A key

initial goal is to identify patients who are able to perform basic

cognitive tasks, which would of course lead to a change in their

diagnosis. That is, some persons who are diagnosed with DOC may in fact

be able to process information and make important life decisions (such

as whether to seek therapy, where to live, and their views on

end-of-life decisions regarding them). Some persons who are diagnosed

with DOC die as a result of end-of-life decisions, which may be made by

family members who sincerely feel this is in the patient's best

interests. Given the new prospect of allowing these patients to provide

their views on this decision, there would seem to be a strong ethical

pressure to develop this research direction to guarantee that DOC

patients are given an opportunity to decide whether they want to live.[197][198]

These and other articles describe new challenges and solutions to

use BCI technology to help persons with DOC. One major challenge is

that these patients cannot use BCIs based on vision. Hence, new tools

rely on auditory and/or vibrotactile stimuli. Patients may wear

headphones and/or vibrotactile stimulators placed on the wrists, neck,

leg, and/or other locations. Another challenge is that patients may fade

in and out of consciousness, and can only communicate at certain times.

This may indeed be a cause of mistaken diagnosis. Some patients may

only be able to respond to physicians' requests during a few hours per

day (which might not be predictable ahead of time) and thus may have

been unresponsive during diagnosis. Therefore, new methods rely on tools

that are easy to use in field settings, even without expert help, so

family members and other persons without any medical or technical

background can still use them. This reduces the cost, time, need for

expertise, and other burdens with DOC assessment. Automated tools can

ask simple questions that patients can easily answer, such as "Is your

father named George?" or "Were you born in the USA?" Automated

instructions inform patients that they may convey yes or no by (for

example) focusing their attention on stimuli on the right vs. left

wrist. This focused attention produces reliable changes in EEG patterns

that can help determine that the patient is able to communicate. The

results could be presented to physicians and therapists, which could

lead to a revised diagnosis and therapy. In addition, these patients

could then be provided with BCI-based communication tools that could

help them convey basic needs, adjust bed position and HVAC (heating, ventilation, and air conditioning), and otherwise empower them to make major life decisions and communicate.[199][200][201]

Motor recovery

People may lose some of their ability to move due to many causes, such as

stroke or injury. Research in recent years has demonstrated the utility

of EEG-based BCI systems in aiding motor recovery and

neurorehabilitation in patients who have had a stroke.[202][203][204][205] Several groups have explored systems and methods for motor recovery that include BCIs.[206][207][208][209]

In this approach, a BCI measures motor activity while the patient

imagines or attempts movements as directed by a therapist. The BCI may

provide two benefits: (1) if the BCI indicates that a patient is not

imagining a movement correctly (non-compliance), then the BCI could

inform the patient and therapist; and (2) rewarding feedback such as

functional stimulation or the movement of a virtual avatar also depends

on the patient's correct movement imagery.

So far, BCIs for motor recovery have relied on the EEG to measure

the patient's motor imagery. However, studies have also used fMRI to

study different changes in the brain as persons undergo BCI-based stroke

rehab training.[210][211][212]

Imaging studies combined with EEG-based BCI systems hold promise for

investigating neuroplasticity during motor recovery post-stroke.[212]

Future systems might include the fMRI and other measures for real-time

control, such as functional near-infrared spectroscopy, probably in tandem with EEGs.

Non-invasive brain stimulation has also been explored in combination with BCIs for motor recovery.[213] In 2016, scientists at the University of Melbourne published preclinical proof-of-concept data related to a potential brain-computer interface technology platform being developed for patients with paralysis to facilitate control of external devices such as robotic limbs, computers and exoskeletons by translating brain activity.[214][215] Clinical trials are currently underway.[216]

Functional brain mapping

Each year, about 400,000 people undergo brain mapping during neurosurgery. This procedure is often required for people with tumors or epilepsy that do not respond to medication.[217]

During this procedure, electrodes are placed on the brain to precisely identify the locations of structures and functional areas. Patients may be awake during neurosurgery and asked to perform certain tasks, such as moving fingers or repeating words. This is necessary so that surgeons can remove only the desired tissue while sparing other regions, such as critical movement or language regions. Removing too much brain tissue can cause permanent damage, while removing too little tissue can leave the underlying condition untreated and require additional neurosurgery. Thus, there is a strong need to improve both methods and systems to map the brain as effectively as possible.

In several recent publications, BCI research experts and medical doctors have collaborated to explore new ways to use BCI technology to improve neurosurgical mapping. This work focuses largely on high gamma activity, which is difficult to detect with non-invasive means. Results have led to improved methods for identifying key areas for movement, language, and other functions. A recent article addressed advances in functional brain mapping and summarized a workshop.[218]
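One way to picture how high gamma activity can point to functional areas is to compare, for each electrode, the high gamma power recorded while the patient performs a task against the power recorded at rest. The sketch below is a simplified illustration, not the method of the cited publications: it assumes per-trial high gamma power values are already available for each electrode, and the function names, the electrode labels and the z-score threshold of 3 are hypothetical.

```python
from statistics import mean, stdev


def task_z_score(rest_powers, task_powers):
    """Mean task power expressed in standard deviations of the rest trials."""
    return (mean(task_powers) - mean(rest_powers)) / stdev(rest_powers)


def map_electrodes(power_by_electrode, z_threshold=3.0):
    """Return the sorted names of electrodes that respond to the task.

    power_by_electrode maps an electrode name to a pair
    (rest_powers, task_powers) of per-trial high gamma power values.
    The threshold is a hypothetical value chosen for illustration.
    """
    return sorted(
        name
        for name, (rest, task) in power_by_electrode.items()
        if task_z_score(rest, task) >= z_threshold
    )


# Illustrative, made-up values: only electrode "A" responds to the task.
recordings = {
    "A": ([1.0, 2.0, 3.0], [6.0, 6.0]),
    "B": ([1.0, 2.0, 3.0], [2.0, 3.0]),
}
responsive = map_electrodes(recordings)
```

Electrodes flagged this way would only be candidates for a movement or language area; a real mapping system would need proper statistics over many trials and clinical confirmation.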

Flexible devices

Flexible electronics are polymers or other flexible materials (e.g. silk,[219] pentacene, PDMS, Parylene, polyimide[220]) that are printed with circuitry. The flexible nature of the organic background materials allows the resulting electronics to bend, and the fabrication techniques used to create these devices resemble those used to create integrated circuits and microelectromechanical systems (MEMS). Flexible electronics were first developed in the 1960s and 1970s, but research interest increased in the mid-2000s.[221]

Flexible neural interfaces have been extensively tested in recent years in an effort to minimize brain tissue trauma related to mechanical mismatch between electrode and tissue.[222] Minimizing tissue trauma could, in theory, extend the lifespan of BCIs relying on flexible electrode-tissue interfaces.

Neural dust

Neural dust is a term used to refer to millimeter-sized devices operated as wirelessly powered nerve sensors. They were proposed in a 2011 paper from the University of California, Berkeley Wireless Research Center, which described both the challenges and the outstanding benefits of creating a long-lasting wireless BCI.[223][224] In one proposed model of the neural dust sensor, the transistor model allowed for a method of separating local field potentials from action potential "spikes", which would greatly diversify the data that could be acquired from the recordings.[223]
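The difference between the two signal types can be illustrated in software: local field potentials are slow fluctuations, while spikes are brief, fast deflections. The sketch below is not the transistor-level method from the neural dust paper. It is a generic stand-in that assumes a moving average is an acceptable crude low-pass filter, and the function names, window length and spike threshold are hypothetical.

```python
def moving_average(signal, window):
    """Centered moving average, used here as a crude low-pass filter."""
    half = window // 2
    smoothed = []
    for i in range(len(signal)):
        lo = max(0, i - half)
        hi = min(len(signal), i + half + 1)
        smoothed.append(sum(signal[lo:hi]) / (hi - lo))
    return smoothed


def split_lfp_and_spikes(signal, window=5, spike_threshold=3.0):
    """Split a recording into a slow component and spike positions.

    Returns (lfp, spike_indices): lfp is the moving average of the signal,
    and spike_indices lists the samples whose fast residual (signal minus
    lfp) exceeds spike_threshold in magnitude. Both parameters are
    hypothetical values chosen for illustration.
    """
    lfp = moving_average(signal, window)
    residual = [s - slow for s, slow in zip(signal, lfp)]
    spikes = [i for i, r in enumerate(residual) if abs(r) > spike_threshold]
    return lfp, spikes


# Illustrative, made-up recording: flat baseline with one sharp deflection.
recording = [0.0] * 10
recording[5] = 10.0
lfp, spike_indices = split_lfp_and_spikes(recording)
```

In this made-up recording the single deflection at sample 5 is reported as a spike, while the smoothed trace keeps only the slow trend.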

See also

Informatics

Intendix (2009)

AlterEgo, a system that reads unspoken verbalizations and responds with bone-conduction headphones

Augmented learning

Biological machine

Cortical implants

Deep brain stimulation

Human senses

Kernel (neurotechnology company)

Lie detection

Microwave auditory effect

Neural engineering

Neuralink

Neurorobotics

Neurostimulation

Nootropic

Project Cyborg

Simulated reality

Telepresence

Thought identification

Wetware computer (uses similar technology for I/O)

Whole brain emulation

https://en.wikipedia.org/wiki/Microwave_auditory_effect

https://en.wikipedia.org/wiki/Telepresence

https://en.wikipedia.org/wiki/Brain-reading

https://en.wikipedia.org/wiki/Mind_uploading

https://en.wikipedia.org/wiki/Molecular_machine#Biological

https://en.wikipedia.org/wiki/Functional_near-infrared_spectroscopy

https://en.wikipedia.org/wiki/Patch_clamp

https://en.wikipedia.org/wiki/Mainframe_computer

https://en.wikipedia.org/wiki/Phosphene

https://en.wikipedia.org/wiki/Transfection

https://en.wikipedia.org/wiki/Light-gated_ion_channel

https://en.wikipedia.org/wiki/Channelrhodopsin

https://en.wikipedia.org/wiki/Scar

https://en.wikipedia.org/wiki/Brain%E2%80%93computer_interface#Synthetic_telepathy/silent_communication

https://en.wikipedia.org/wiki/Sensory_substitution

https://en.wikipedia.org/wiki/Brain%E2%80%93computer_interface

https://en.wikipedia.org/wiki/Connectomics

https://en.wikipedia.org/wiki/Systems_neuroscience

https://en.wikipedia.org/wiki/Neuropathology

https://en.wikipedia.org/wiki/Neuro-ophthalmology

https://en.wikipedia.org/wiki/Neuroradiology

https://en.wikipedia.org/wiki/Neurosurgery

https://en.wikipedia.org/wiki/Neurovirology

https://en.wikipedia.org/wiki/Chronobiology

https://en.wikipedia.org/wiki/Molecular_cellular_cognition

https://en.wikipedia.org/wiki/Global_neurosurgery

https://en.wikipedia.org/wiki/Neuroanthropology

https://en.wikipedia.org/wiki/Neural_engineering

https://en.wikipedia.org/wiki/Neurotechnology

https://en.wikipedia.org/wiki/Neurocriminology

https://en.wikipedia.org/wiki/Neuroesthetics

https://en.wikipedia.org/wiki/Neuroethics

https://en.wikipedia.org/wiki/Neuroethology

https://en.wikipedia.org/wiki/Neuromorphic_engineering

https://en.wikipedia.org/wiki/Neurophenomenology

https://en.wikipedia.org/wiki/Artificial_neural_network

https://en.wikipedia.org/wiki/Neural_circuit

https://en.wikipedia.org/wiki/Neurochip

https://en.wikipedia.org/wiki/Neurodegenerative_disease

https://en.wikipedia.org/wiki/Neurodevelopmental_disorder

https://en.wikipedia.org/wiki/Neurogenesis

https://en.wikipedia.org/wiki/Neuroimaging

https://en.wikipedia.org/wiki/Neuromodulation

https://en.wikipedia.org/wiki/Neurotoxin

https://en.wikipedia.org/wiki/Arsenic

https://en.wikipedia.org/wiki/marburg

https://en.wikipedia.org/wiki/de-boning/de-blooding

https://en.wikipedia.org/wiki/fluid-generator-removal

https://en.wikipedia.org/wiki/Insecticide

https://en.wikipedia.org/wiki/Ammunition

https://en.wikipedia.org/wiki/Envelope_(mathematics)

draft